To find our way in the world, we classify it into concepts, such as “telephone.” Until now, it was unclear how the brain retrieves these concepts when we only encounter the word and don’t perceive the objects directly. Scientists at the Max Planck Institute for Human Cognitive and Brain Sciences (MPI CBS) in Germany have now developed a model of how the brain processes abstract knowledge. They found that depending on which features one concentrates on, the corresponding brain regions go into action.

To understand the world, we arrange individual objects, people, and events into different categories or concepts. Concepts such as “telephone” consist primarily of visible features, e.g., shape and color, and sounds, such as ringing. In addition, there are actions, e.g., how we use a telephone.

However, the concept of telephone does not only arise in the brain when we have a telephone in front of us. It also appears when the term is merely mentioned. If we read the word “telephone,” our brain also calls up the concept of telephone. The same regions in the brain are activated that would be activated if we actually saw, heard, or used a telephone. The brain thus seems to simulate the characteristics of a telephone when its name alone is mentioned.

Until now, however, it was unclear whether the entire concept of a telephone is always called up or, depending on the situation, only individual features such as sounds or actions. It was also unclear whether only the brain areas that process the respective feature become active. So, when we think of a telephone, do we always think of all its features or only the part that is needed at the moment? Do we retrieve our sound knowledge when a phone rings, but our action knowledge when we use it?

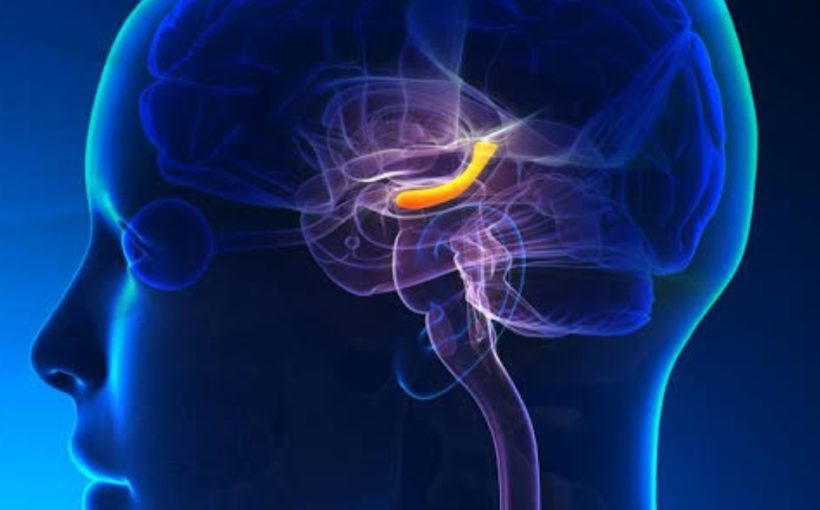

Researchers at the Max Planck Institute for Human Cognitive and Brain Sciences in Leipzig have now found the answer: It depends on the situation. If, for example, the study participants thought of the sounds associated with the word “telephone,” the corresponding auditory areas in the cerebral cortex were activated, which are also activated during actual hearing. When thinking about using a telephone, the somatomotor areas that underlie the involved movements came into action.

In addition to these sensory-dependent, so-called modality-specific areas, the researchers found areas that process both sounds and actions together. One of these so-called multimodal areas is the left inferior parietal lobule (IPL). It became active when both features were requested.

The researchers also learned that in addition to characteristics based on sensory impressions and actions, there must be other criteria by which we understand and classify terms. This became apparent when the participants were only asked to distinguish between real and invented words. Here, a region that was not active for actions or sounds kicked in: the so-called anterior temporal lobe (ATL). The ATL therefore seems to process concepts abstractly or “amodally,” completely detached from sensory impressions.

From these findings, the scientists finally developed a hierarchical model to reflect how conceptual knowledge is represented in the human brain. According to this model, information is passed on from one hierarchical level to the next and at the same time becomes more abstract with each step. On the lowest level, therefore, are the modality-specific areas that process individual sensory impressions or actions. These transmit their information to the multimodal regions such as the IPL, which process several linked perceptions simultaneously, such as sounds and actions. The amodal ATL, which represents features detached from sensory impressions, operates at the highest level. The more abstract a feature, the higher the level at which it is processed and the further it is removed from actual sensory impressions.

“We thus show that our concepts of things, people, and events are composed, on the one hand, of the sensory impressions and actions associated with them; and on the other hand, of abstract symbol-like features,” explains Philipp Kuhnke, lead author of the study, which was published in the renowned journal Cerebral Cortex. “Which features are activated depends strongly on the respective situation or task,” adds Kuhnke.

In a follow-up study in Cerebral Cortex, the researchers also found that modality-specific and multimodal regions work together in a situation-dependent manner when we retrieve conceptual features. The multimodal IPL interacted with auditory areas when retrieving sounds, and with somatomotor areas when retrieving actions. The study also showed that the interaction between modality-specific and multimodal regions determined the behavior of the study participants: The more these regions worked together, the more strongly the participants associated words with actions and sounds.

The scientists investigated these relationships with the help of various word tasks that the participants solved while lying in a functional magnetic resonance imaging (fMRI) scanner. In these tasks, they had to decide whether they strongly associated the named object with sounds or actions. The researchers showed them words from four categories: 1) objects associated with sounds and actions, such as “guitar,” 2) objects associated with sounds but not with actions, such as “propeller,” 3) objects associated with actions but not with sounds, such as “napkin,” and 4) objects associated neither with sounds nor with actions, such as “satellite.”

Max Planck Society